Terraform - import aws_s3_bucket does not store important attributes like acl

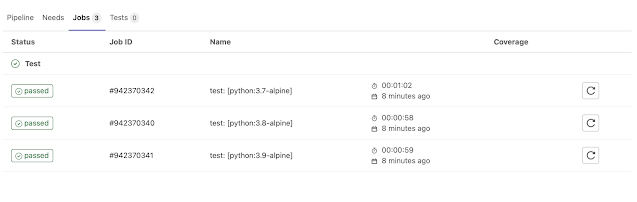

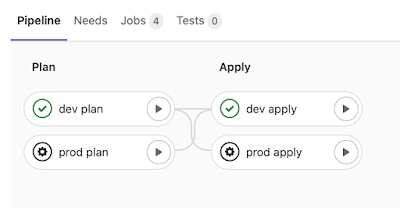

Recently, I had to import some AWS resources into Terraform. Most things went smoothly, but some did not. More specifically, I encountered this problem, and here is my reply on how to deal with it for now. In this post, I am going to elaborate on the issue.

So, what exactly did I run into? Here is the code:

Such a bucket already existed, and I wanted to import it into Terraform (the bucket was public). So, I typed `terraform import 'aws_s3_bucket.my-bucket' 'my-bucket'` and pressed enter:

Wait, what? I understand the `force_destroy` argument (it is `false` by default), because I had not specified it, but `acl`? I have two `grant` blocks... and according to the documentation, `acl` conflicts with `grant`. So, how is that even possible? 🤔

It was tempting to run the `terraform apply` command... so let's do that! And what happened? Terraform (or should I say the AWS provider?) ignored these `grant` blocks and removed some ACL (access control list) records from my bu...
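For orientation, a public bucket declared with two `grant` blocks might look roughly like the sketch below. This is an illustration, not the exact configuration from this post: it assumes an AWS provider version before 4.0 (where `grant` is still an argument of `aws_s3_bucket`), and both the `aws_canonical_user_id` lookup and the specific permissions are hypothetical choices.

```hcl
# Hypothetical sketch, not the original configuration.
# Assumes AWS provider 3.x, where `grant` is an argument of aws_s3_bucket
# and conflicts with `acl`.

# Looks up the canonical user ID of the account running Terraform.
data "aws_canonical_user_id" "current" {}

resource "aws_s3_bucket" "my-bucket" {
  bucket = "my-bucket"

  # Assumed grant: full control for the bucket owner.
  grant {
    id          = data.aws_canonical_user_id.current.id
    type        = "CanonicalUser"
    permissions = ["FULL_CONTROL"]
  }

  # Assumed grant: public read access through the predefined AllUsers group.
  grant {
    type        = "Group"
    uri         = "http://acs.amazonaws.com/groups/global/AllUsers"
    permissions = ["READ"]
  }
}
```

With a resource like this, it is worth running `terraform plan` after the import and reading the diff closely before applying anything, since an unexpected `acl` change in the plan is the warning sign described above.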